What the Healthiest Human Diets Have in Common

I once spent a summer living with a family of wild Arctic wolves less than 500 miles from the North Pole, on remote northern Ellesmere Island. My purpose was to conduct wolf behavioral research in this unique environment, where wolves were not hunted. I was in the company of the world’s foremost scientific expert on the wolf, Dr. L. David Mech, who was also a longtime friend and mentor in the field of wildlife biology, where I worked for some years.

Ellesmere Island hosted numerous archaeological sites that were hundreds or even thousands of years old. There were several Thule sites near our encampment, as well as those of a much earlier unidentified culture that, unlike the Thule, specialized in hunting land mammals. The Thule (predecessors of the Inuit) specialized in hunting seal, walrus, and whales and fished for much of what they consumed, particularly during the winter months, when they ventured out onto the polar ice. In summer, they maintained shoreline villages, marked with stone circles where animal hides were often stretched over whale bones for shelter. The bones of animals that had been hunted and fished were strewn around these encampments and were generally quite well preserved.

Before I arrived on Ellesmere, I had been eating lots of salads and other vegetables, some whole grains, legumes, potatoes, pasta, etc. – all that stuff the nutritional authorities tell you to eat – along with some lean meats, and I had been doing a bunch of juicing. I was concerned about what would happen to my health since I wouldn’t get a lot of fresh produce during the months that I would spend there. But once I arrived, something unexpected happened: I began to crave fat, possibly for the first time in my life. I found myself sitting on the tundra, well bundled against the cold, munching on salami, cheese, nuts, and nut butters – with almost no veggies, save the onions we had brought along to cook with. By the end of the summer, after eating all that fat and barely exercising, I had lost roughly 25 pounds. That simply wasn’t supposed to happen after eating tons of fat, according to everything I had ever been told about diet.

Looking out over the vast tundra, I realized that this landscape had been occupied for probably 10,000 years by innumerable human groups and cultures. Whatever these robust and hardy ancient people ate to nourish themselves, it certainly wasn’t salads. Constant permafrost made cultivating anything pretty much impossible, and there just wasn’t a lot of wild, green forage that would be considered edible for humans. All of this opened the door, if you will, to what I now think of as my “Primal” perspective.

Shortly after arriving home, I was serendipitously introduced to the work of Dr. Weston A. Price, and it was extremely eye opening. Suddenly, things started to make more sense. I knew I was very close to finding the universal human dietary foundation that I had always sought. And once I realized how much could be learned through the logical investigation into what our ancestors ate, I began to dig back even further than Price had. I wanted to find out what our most ancient prehistoric ancestors had to teach us from the longest stretch of our evolutionary journey.

It seemed logical and rational to imagine that the selective pressures we faced as an early evolving species would have been largely responsible for shaping our dietary choices, and in turn those choices would have shaped our physiological makeup and established our most basic nutritional requirements. Bingo! That’s what led me down the path even further back than the post-agricultural Neolithic time period – which most versions of the “Paleo diet” are based on – to the Ice Age.

A striking aspect of the Ice Age (other than the inhospitable climate) was the vast array of Pleistocene megafauna, such as woolly mammoths and mastodons, that we coexisted with from 2.6 million years ago until about 10,000 years ago. These incredible beasts formed a major, highly meaningful focus of our diets for the better part of our evolutionary history – a fact that never really gets discussed much. The thing to understand about these massive animals was their almost equally massive fat content, which we coveted.

Extrapolating from the fat content of elephants, scientists have estimated that woolly mammoths and mastodons carried more than 50% of their body composition as fat and easily had about four inches of subcutaneous fat beneath their hides. This was in addition to their organ and tissue fats, including those found in their brains, tongues, and marrow, which we also would have gobbled up.

In North America, prehistoric humans were able to hunt four species of elephant, giant sloths, bear-sized beavers (Castoroides ohioensis), bison twice the size of today’s, woolly rhinos, and much more. Prior to the end of the last period of glaciation, roughly 10,000 years ago, we had 120 more species of megafauna throughout the world compared to what exists today. There were 40 additional species in North America alone. That’s a lot of fatty meat!

The thing to understand is this: Fat, to our unique human physiological makeup, literally means survival – and survival trumps everything else when it comes to what your body prioritizes. As Ice Age beings, we were designed to weather not just the cold, but extremes of climate (cold and heat, drought, wildfires, floods, huge storms, and the effects of widespread volcanic activity). We became human, in essence, by surviving disasters. There would have been time periods and places where plant life – particularly edible plants – would have been pretty scarce and impractical as a meaningful source of calories. Nutrient density would have been the most important dietary consideration – and there is nothing more nutrient- and calorie-dense than meat, and especially the fat that comes with it.

As understood by most anthropologists today, it was likely our dependence on the meat and especially the fat of the animals we hunted that not only allowed us to survive but also resulted in the extremely rapid enlargement of the human brain, something that is unique and truly unprecedented in all of nature. Our brain serves, more than any other human characteristic, to define us as a species. In other words, dietary animal fat is quite literally central to what made us human in the first place!

Here is another key factor to consider: Optimizing brain function is not just about eating enough dietary fat. Apart from the quality, sourcing, and composition of the fat, we need to take into account the potentially critical value of ketones (the water-soluble energy units of fat) – in other words, dietary fat in the absence of dietary sugar and starch.

Dr. George Cahill, Jr., renowned expert on the metabolism of starvation and ketosis, stated unequivocally, “Brain use of BOHB [beta hydroxybutyrate – i.e., ketones], by displacing glucose as its major fuel, has allowed man to survive lengthy periods of starvation. But more importantly, it [ketosis] has permitted the brain to become the most significant component in human evolution.”

Regarding our phenomenal increase in brain size, researchers have stated, “Much of this increase occurred within the period following 800,000 years ago, during which time mean endocranial volume in [our genus] Homo increased by approximately 70 ml per 100,000 years.” That’s a lot! In fact, it is a rate of rapidly increasing brain size and sophistication that is unprecedented in nature. And of all the primates in the world, we humans have by far the most voracious appetite for dietary fat and are best designed to consume it – hands down.
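As a rough back-of-the-envelope check on the quoted rate, the sketch below simply multiplies it out over a span of time. It assumes, purely for illustration, that the average of 70 ml per 100,000 years held steadily from 800,000 years ago until 200,000 years ago; the end date, the function name, and the variable names are assumptions made for this example and do not come from the quoted research.

```python
def cumulative_volume_gain_ml(start_years_ago, end_years_ago, ml_per_100k_years=70.0):
    """Total endocranial volume gain if the average rate held over the whole span.

    The 70 ml per 100,000 years default is the rate quoted in the text.
    Treating it as constant across the span is an illustrative assumption.
    """
    span_years = start_years_ago - end_years_ago
    return span_years / 100_000 * ml_per_100k_years


# Assumed span: 800,000 years ago to 200,000 years ago (illustrative only).
gain_ml = cumulative_volume_gain_ml(800_000, 200_000)
print(f"Estimated gain over the span: {gain_ml:.0f} ml")
```

Under those assumptions the estimate comes to roughly 420 ml, which gives a sense of scale for what "70 ml per 100,000 years" adds up to.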

Our brains are constructed from the fats we supply them with through our diet. The two fatty acids most responsible for characterizing our unique human cognition – the 20- and 22-carbon fatty acids, arachidonic acid (AA) and docosahexaenoic acid (DHA) – are found exclusively within our food supply in animal source fats.

By the time Homo erectus emerged, about two million years ago, hunting and scavenging dominated our dietary economy. The more fat we ate as we were evolving, the bigger our brains grew and the more capable we became of successfully hunting these huge animals.

By the way, I have personally observed wolves going after their prey. What distinguishes them from us as carnivores is that they typically go after the sick, the old, the weak, and the very young, because these are the animals that are easiest for them to catch – most of which have significantly less body fat. Our ancient ancestors, on the other hand, selectively hunted the healthiest (i.e., fattest and sassiest) animals in any given herd, even though these animals were far more difficult and dangerous to procure.

An article authored in part by Israeli anthropologist Miki Ben-Dor offers a similar hypothesis. He proposes that prehistoric humans selectively hunted for fat, first and foremost. One of the excellent points that he makes – and that counters all the emphasis on lean meat by many in the Paleo crowd – concerns what can be referred to as our physiological protein ceiling.

Ben-Dor states: “It is known that diets deriving more than 50% of the calories from lean protein can lead to a negative energy balance, the so-called ‘rabbit starvation’ due to the high metabolic costs of protein digestion, as well as a physiological maximum capacity of the liver for urea synthesis.… A recent review of the literature ([57]:888) recommends a long-term maximum protein intake of 2 gr/kg body weight/day.”

The obvious solution to this protein “ceiling” is the abundant consumption of fat with our dietary protein, to dilute it and facilitate its safe and effective utilization.
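To make the arithmetic behind this ceiling concrete, here is a minimal sketch. It uses the 2 g/kg/day maximum quoted above together with the standard energy values of about 4 kcal per gram of protein and 9 kcal per gram of fat. The 70 kg body weight and 2,500 kcal daily energy need are illustrative assumptions, not figures from Ben-Dor's paper, and the function name is hypothetical.

```python
PROTEIN_KCAL_PER_G = 4  # standard approximate energy value of protein
FAT_KCAL_PER_G = 9      # standard approximate energy value of fat


def protein_ceiling(body_weight_kg, daily_kcal, max_protein_g_per_kg=2.0):
    """Estimate how much daily energy protein can supply at the quoted ceiling.

    body_weight_kg and daily_kcal are example inputs (assumptions);
    max_protein_g_per_kg defaults to the 2 g/kg/day figure quoted in the text.
    """
    max_protein_g = max_protein_g_per_kg * body_weight_kg
    protein_kcal = max_protein_g * PROTEIN_KCAL_PER_G
    remaining_kcal = max(daily_kcal - protein_kcal, 0.0)
    return {
        "max_protein_g": max_protein_g,
        "protein_kcal": protein_kcal,
        "protein_share": protein_kcal / daily_kcal,
        "remaining_kcal": remaining_kcal,
        # Grams of fat needed if all remaining energy came from fat.
        "fat_g_if_all_remaining_from_fat": remaining_kcal / FAT_KCAL_PER_G,
    }


# Illustrative person: 70 kg, needing 2,500 kcal per day (both assumed).
result = protein_ceiling(70, 2500)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

With these example inputs, protein at the ceiling covers only a bit more than a fifth of the day's energy, so most calories have to come from somewhere else. On a diet without sugars and starches, that somewhere else is fat.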

But what about the so-called cooking hypothesis? There is a popular claim that cooking was “the thing” that ultimately made us human. This hypothesis was basically supposed to be an answer to our human inability to process large amounts of fiber into energy.

I’ll just quote Josh Snodgrass, William Leonard, and Marcia Robertson – three of the most accomplished and respected anthropologists specializing in this question – from a paper of theirs back in 2009: “Although cooking is clearly an important innovation in hominid evolution that served to increase dietary digestibility and quality, there is very limited evidence for the controlled use of fire by hominids prior to 1.5 Ma [million years ago]. The more widely held view is that the use of fire and cooking did not occur until considerably later in human evolution, probably closer to 200-250,000 years ago, although possibly as early as 400,000 years ago. In addition, nutritional analyses of wild tubers used by modern foragers suggest that the energy content of these resources is markedly lower than that of animal foods, even after cooking.”

And a more recently published study suggests that our ability to make use of fire as a cooking tool at will was probably much more recent – taking place anywhere from 75,000 to 100,000 years ago.

Also, various nitrogen isotope studies confirm low plant consumption in the late Paleolithic era, even though cooking was already well established. In any case, by 200,000 years ago, our brains were already fully modern, if not even a bit larger than they are now. And we never needed cooking in order to reliably make use of dietary fat. Cooking did not make us human, and neither did grains or potatoes. Dietary fat did.

I was recently gobsmacked by the realization that, in virtually all the cave paintings worldwide depicting animals that we once hunted, the prey animals are typically shown as unnaturally fat. Interestingly, humans (for the most part) are not depicted in this way (see the cave painting at the bottom center of the photo below), and neither are predators.

Cave paintings are now understood as being likely shamanic in nature – in other words, depicting that which is sacred or which prehistoric people may have sought as most desirable. Make no mistake, these were extraordinary artists, fully capable of depicting animals accurately – yet they chose to portray the ones hunted for food as being disproportionately fat. The rational implication here is that this was the most desirable characteristic in the food animals that they hoped to successfully hunt. In looking through hundreds of cave paintings and images of rock art from all over the world, I have found this to be a theme that comes up again and again. And the only human depiction that is occasionally characterized in this manner involves female fertility totems, which tells you a thing or two about the symbolic importance of fat when it comes to successful and healthy reproduction!

We’ve talked about the critical role that nutrient- and energy-dense fats played during the Ice Age. But fat continued to play just as venerated a role in more recent, neo-Paleolithic cultures, including those in places like the arid outback and the tropics. In other words, the climate doesn’t have to be cold or particularly extreme in order for fat to play a critical role in the human diet.

Even in relatively modern times, all traditional cultures revered and coveted sources of dietary fat; for example:

- Plains and Northern Indians used pemmican.

- Lapps, Saami, and Siberians valued reindeer fat.

- Marsh Arabs and Berbers prized camel fat.

- Canadian First Nations relished moose fat.

- Coast Salish tribes used oolichan grease.

- The Inuit ate large amounts of seal, walrus, and whale fat.

- Innus (of northeastern Canada) coveted caribou fat.

- Aboriginal people of Australia sought out emu fat.

In fact, if an Aboriginal hunter killed something like a kangaroo that was overly lean, they would leave it to rot and find a fatter one.

Additional sources of dietary fat and fat-soluble nutrients in Indigenous cultures have included things like egg yolks, organ meats, insects, grubs, fish heads, and shellfish, as well as some nuts and seeds – so it’s clear that fats have played a very important role in post-Ice Age diets!

But it’s not just about macronutrients! These diets were also especially rich in fat-soluble micronutrients – something that I think is often overlooked. Many of these critical fat-soluble nutrients can only be found in animal source foods.

- Vitamin A (retinol): organ meats, fully pastured egg yolks

- Vitamin D3: animal fats (tallow, suet, brains, tongue, marrow, organs)

- Vitamin E-complex: nuts and seeds, grassfed animal fats/meat

- Vitamin K2/MK-4: grassfed animal fats/organ meats, fish eggs, shellfish, fat/oil from certain emus, poultry liver/fat, and grassfed, cultured ghee

- CLA: fully pastured animal fats

- CoQ10: organ meats such as liver, kidney, and heart; beef; oily fish like sardines and mackerel

- L-carnosine: red meat

CLA is one of the most potent anti-cancer nutrients in the human diet (found in significant amounts only in 100% grassfed meat), and L-carnosine – found exclusively in animal source foods – is highly concentrated in brain and muscle tissue (including the heart). Carnosine’s powerful antioxidant effects, coupled with its ability to scavenge both free radicals and damaged protein products, give it unique protective characteristics with the potential to lengthen life span (translation: minimize disease).

The fat-soluble vitamins (A, D3, E-complex, and K2/MK-4), when in their activated form (as found naturally in animal source foods), are able to enter a cell’s nucleus and bind to nuclear receptors, thereby modifying the way genes are transcribed and expressed. These changes in gene expression can in turn encourage or inhibit various internal processes, leading to health or disease.

Getting Back to Evolution

Until 11,600 years ago, we had been evolving as nearly pure meat and fat eaters for 100,000 generations or more – and then everything unexpectedly and cataclysmically changed. The vast ice sheets that covered most of northern North America and extreme South America suddenly vanished. Coastal areas lost whole civilizations under hundreds of feet of seawater. Whether it was fragments of a comet (the most current and likeliest theory at hand), a meteor, or a magnetic reversal paired with a massive solar flare that caused these ice sheets to melt so quickly, it is clear that our world changed abruptly, violently, and decisively. The massive fat-rich megafauna mostly vanished in the blink of an eye. Suddenly we were faced with a critical survival emergency and a need to adapt to rapidly changing circumstances. So we created agriculture and began growing grains.

In the process, we shifted from about a three-hour work day to one where we were working eight-plus hours to obtain a far less nutrient-dense food that we were less adapted to utilize. We clearly suffered poorer health as a result. Once we adopted agriculture, our average life expectancy actually halved compared to that of our prehistoric ancestors, whose primary causes of death were things like infant mortality, accident, and infection. It was at the beginning of the agricultural revolution that we began to develop the “diseases of Western civilization” – metabolic and deficiency-related diseases and cancers.

But there was another noticeable and rather troubling trend that began after we adopted agriculture. As stated by Hawks, “Human populations during the last 10,000 years have undergone rapid decreases in average brain size as measured by endocranial volume or as estimated from linear measurements of the cranium.” In fact, Cro-Magnon humans 20,000 years ago had bigger brains than we do today. Let’s just say that evolution may not be moving in the direction we would like to think it is.

Remember, the overall fatty acid composition of our brain, not just its size, matters with respect to its higher functions – with structural 20- and 22-carbon chain fatty acids such as AA and DHA from animal fats accounting for many of our unique cognitive capacities. And when it comes to DHA, if it’s not in your diet, it isn’t in your brain, either. No one gets this from flax oil, chia seeds, sacha inchi seeds, or walnuts! We were designed to get these evolutionarily and cognitively critical omega-3 fatty acids directly from animal source foods.

A study funded by the National Institute on Aging found that people age 70 and older who eat food high in carbohydrates have nearly four times the risk of developing mild cognitive impairment, and the danger rises further with a diet heavy in sugar. But, according to the analysis, “those whose diets were highest in fat – compared to the lowest – were 42 percent less likely to face cognitive impairment, and those who had the highest intake of protein had a reduced risk of (just) 21 percent.”

So fat is clearly at the center of the equation when it comes to your long-term brain health!

In addition, according to findings over the last decade, people today are being diagnosed with disease at much younger ages than their grandparents. Age 30 has become the new 45 in terms of disease onset. And this is not because we suddenly began consuming more animal-based foods and fats! We have been told for decades now to avoid animal source foods and fats, and follow a diet based on carbohydrates – for the first time in all of human history. What have these dietary guidelines done for us?

A study published in May 2015 took a close look at the impact of dietary guidelines – which promoted low-fat and low-cholesterol foods and a higher carbohydrate diet – on the health of US citizens between the years 1965 and 2011.

It found that the rates of obesity and numerous metabolic diseases during that time increased dramatically, while our intake of carbohydrates significantly increased and our intake of dietary animal fats substantially decreased.

The guidelines told us to limit total fat calories to no more than 35% of our caloric intake, limit saturated fats to no more than 10% of our diet (an unprecedented limit in human evolutionary history), and keep protein to between 10% and 35%. We obediently complied. Where dietary carbs were concerned, the Acceptable Macronutrient Distribution Range (AMDR) was set at 55-65%, with the guidelines stating (without any scientific basis whatsoever) that 45% was the bare minimum “needed” to meet the nonexistent “optimal dietary requirements.” Again, we naively obliged, consuming just over 50% of our diet as carbs – which was much more than enough to set us up for a rampant state of metabolic chaos. It’s worth pointing out that among the major macronutrients – proteins, fats, and carbohydrates – the only one for which there is no scientifically established human dietary requirement is carbohydrates!
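To put those percentages into everyday terms, the following minimal sketch converts a share of daily calories into grams. It assumes a 2,000 kcal day (an illustrative figure, not one taken from the guidelines discussed here), treats the 10% saturated fat limit as a share of calories, and uses the standard approximate energy values of 4 kcal per gram for carbohydrate and protein and 9 kcal per gram for fat. The function and variable names are hypothetical.

```python
# Standard approximate energy values, in kcal per gram.
KCAL_PER_GRAM = {"carbohydrate": 4, "protein": 4, "fat": 9}


def grams_from_share(daily_kcal, share, macronutrient):
    """Convert a share of daily calories (0 to 1) into grams of a macronutrient."""
    return daily_kcal * share / KCAL_PER_GRAM[macronutrient]


daily_kcal = 2000  # assumed daily intake, for illustration only

carb_g = grams_from_share(daily_kcal, 0.50, "carbohydrate")  # roughly 50% as carbs
fat_cap_g = grams_from_share(daily_kcal, 0.35, "fat")        # 35% total fat limit
sat_fat_cap_g = grams_from_share(daily_kcal, 0.10, "fat")    # 10% saturated fat limit

print(f"Carbohydrate at 50% of calories: {carb_g:.0f} g")
print(f"Total fat cap at 35% of calories: {fat_cap_g:.0f} g")
print(f"Saturated fat cap at 10% of calories: {sat_fat_cap_g:.0f} g")
```

On these assumptions, half of a 2,000 kcal day as carbohydrate works out to about 250 grams, while the total fat limit comes to about 78 grams and the saturated fat limit to about 22 grams.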

It’s also important to point out that all utilizable carbohydrates (sugary or starchy foods) are glucose by the time they hit your bloodstream. So, we need to look far beyond the ill effects of refined sugar when tracing the derangement of human metabolism and health to its source. In fact, it turns out that a bigger problem metabolically than sugar on its own is the combination of sugars/starches and fats. Let’s face it, our ancestors weren’t serving up baked potatoes with their woolly mammoth steaks, and it turns out that there was a very good reason not to!

Dietary cholesterol has been another casualty of this misinformation and disinformation campaign over the last several decades. The truth is that cholesterol is absolutely critical for health. It’s vital for our:

- Brain health

- Neonatal brain development

- Cell receptors

- Myelin

- Bile acids

- Steroidal hormones

- Cellular membranes

- Embryonic development

- Immune function

I worry way more about people having cholesterol that is too low than supposedly “too high” (the thresholds for which were not established scientifically but instead arbitrarily). It’s up to us to take the “aurochs by the horns,” as it were, and reclaim our own (what I like to call) “Primal Birthright.”

Looking Back to the Work of Weston A. Price

Weston A. Price really laid a lot of groundwork for our understanding of traditional diets and what happened once we abandoned those. The photographs he brought back from his investigative travels tell the story in a way words never could. We all owe a debt of immeasurable gratitude to him for what he left us.

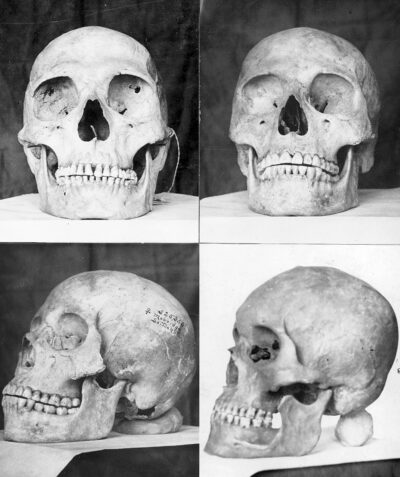

Just look at these ancient skulls that he depicted in his book Nutrition and Physical Degeneration. The sheer uniform perfection of the skull morphology kind of leaves you breathless. Interestingly, most any paleoanthropologist worth their salt can look at a set of human remains and tell you whether it belongs to a pre-agricultural or post-agricultural human – strictly based on the health of that skeleton, its robustness, its bone density, and its uniform morphological perfection (or lack thereof). Post-agricultural skeletons also show clear signs of mineral deficiencies, structural abnormalities, and dental disease and malocclusions.

In his travels to isolated and traditional cultures around the world during the 1930s and 1940s, Price observed a huge variety of traditional diets that seemed to result in good to excellent health. So what was the most popular take-away message everyone seemed to get from all this?

“Just eat real food.”

But is this really the bottom-line message that we need to take home with us? Certainly, it isn’t the whole story. There is no rational basis for the assumption that just because a food is supposedly natural or “real,” it is anywhere near optimal for your health or even necessarily good for you.

So, in the spirit of evolutionary thinking, I’d like to pose the following, all too frequently overlooked, question. In fact, it is basically the same question Price asked: What did the seemingly diverse diets of ostensibly optimally healthy groups of people all have in common?

1) All the healthy cultures Price studied ate as many animal-source foods as were available to them. He was never able to find a single vegan culture anywhere (and he did look – and was actually disappointed that he wasn’t able to find any).

2) All healthy cultures held fat-rich foods in the highest esteem of all! The most sought after, venerated, and sacred of foods in every optimally healthy culture Price studied were those highest in fat (usually animal fat) and fat-soluble nutrients.

Therein lies an important – and I believe key – foundational message. In fact, from these core principles derived from the work of Weston Price, we have the foundational formula for the health of every human being.

As humans, we are much more alike than we are unalike. What defines us as a species is not our differences but those things that we have in common. We all share the same type of unique brain and require the same ranges of macronutrients. We all need complete, animal-source protein, along with a significant amount and variety of quality dietary fats, critical fat-soluble nutrients, and essential fatty acids in order to be optimally healthy. And there is no one for whom dietary carbohydrates are essential. We may vary in terms of nuances and polymorphisms, but these do not change the solidly established foundations we have in common.

In light of this, let’s revisit Price’s traditional diets and distill them down to the simplest diet that met every single criterion essential for optimal health – that of the Inuit. This is really the closest we come to the essential basics: a mostly fat-based diet from animal source foods.

Among the many groups Price studied, few impressed him more than the Inuit. They had the lowest rate of dental disease of any culture he studied, and they exhibited extraordinary physical robustness, while also demonstrating exceptional disposition and character.

Whether an individual today will tolerate a more varied, broadly inclusive diet well depends on 1) the degree to which their foundational health is in order; 2) the extent of their genetic and other health compromises; and 3) their immune tolerance of various (mostly plant-based) foods.

So if we want to optimize this foundational dietary framework for ourselves, what might we do? We might add – in such a way that is not compromising for us – foods that can augment our intake of potentially beneficial compounds and help us to meet the challenges of our environmentally health-hostile world. These foods could include fibrous (nonstarchy) vegetables and greens, along with things like avocados, olives, coconut, garlic, turmeric and other herbs, mushrooms, cultured vegetables, and kvass, to provide variety, bulk, extra vitamins, beneficial phytochemicals, antioxidants, and a little additional help with detoxification. We might even eat a few berries. Many berries help combat inflammation and help us maintain our gastrointestinal microbiome by supplying natural prebiotics.

Keep in mind that not even these generally beneficial plant foods are truly “essential” to us, though. But that doesn’t mean we can’t or shouldn’t benefit from and enjoy them, as long as we tolerate them well.

We no longer live in Weston Price’s world, much less the world of our prehistoric ancestors. However, the two key foundational principles derived from Price’s work are more critical for us today than ever before. We need to be sure that whatever we include in our diets supports those principles.

If your goal is optimal health, the bottom line is obtaining quality nutrition from foods sourced from animals that foraged for their natural diets. Maintain a moderate protein intake, while leaving out sugars and starches. Eat as much fat as you need from a variety of quality natural sources in order to satisfy your appetite – but there is no need to add so much to each meal that your food is floating in it. Your body’s leptin and ghrelin hormones will let you know when you have eaten enough fat. And there’s no need to go pure “carnivore.” Use the vegetables to help fill you with bulk and provide a variety of bonus nutrients.

So what’s the moral of this story?

“Fat is where it’s at!”

Nora Gedgaudas, CNS, FNTP, BCHN, is an experienced neurofeedback clinician, nutritional consultant, author, speaker, and educator on the subject of human health/nutrition. She was the first within the genre to promote an ancestrally oriented, fat-based ketogenic approach to eating. Nora is the author of Primal Body, Primal Mind; Rethinking Fatigue; and Primal Fat Burner. She proudly serves on the board of Price-Pottenger. For more information, visit primalbody-primalmind.com and primalcourses.com.

REFERENCES

Cahill GF Jr, Veech RL. Ketoacids? Good medicine? Trans Am Clin Climatol Assoc. 2003;114:149-61. PMID: 12813917.

Hawks J. Selection for smaller brains in Holocene human evolution. 2011. https://doi.org/10.48550/arXiv.1102.5604.

Ben-Dor M, Gopher A, Hershkovitz I, Barkai R. Man the fat hunter: the demise of Homo erectus and the emergence of a new hominin lineage in the Middle Pleistocene (ca. 400 kyr) Levant. PLOS ONE. 2011;6(12): e28689. https://doi.org/10.1371/journal.pone.0028689.

Snodgrass JJ, Leonard WR, Robertson ML. The energetics of encephalization in early hominids. 2009. Page 13. http://www.pinniped.net/Snodgrassenergetics2009.pdf.

Cordain L. Ancestral fire production: implications for contemporary ‘paleo’ diets. 2014 Apr 20. doi:10.13140/RG.2.2.14793.60006.

Roberts RO, Roberts LA, Geda YE, et al. Relative intake of macronutrients impacts risk of mild cognitive impairment or dementia. J Alzheimers Dis. 2012 Jan 1;32(2):329-39. doi: 10.3233/JAD-2012-120862.

Mozaffarian D, Ludwig DS. The 2015 US dietary guidelines: lifting the ban on total dietary fat. JAMA. 2015;313(24):2421-22. doi:10.1001/jama.2015.5941.

Price WA. Nutrition and Physical Degeneration. Eighth edition. Lemon Grove, CA: Price-Pottenger, 2022.

Published in the Journal of Health and Healing™

Winter 2023-24 | Volume 47, Number 4

Copyright © 2024 Price-Pottenger Nutrition Foundation, Inc.®

All Rights Reserved Worldwide